How Dynamic Gaussian Avatars are rewriting what's possible in real-time character rendering — and why it matters for everything Digito builds.

Something fundamental is shifting in how digital humans are built. Not at the level of art direction or storytelling — at the level of geometry itself.

For years the dominant logic was clear: polygons, rigs, blend shapes. A mesh with thousands of carefully sculpted vertices, driven by bones, driven by code. Beautiful when it works. Brittle when it doesn't. And always, always, slow to produce.

A new family of techniques is changing that equation. 3D Gaussian Splatting — and its extension into dynamic, animatable avatars — represents one of the most significant shifts in real-time character rendering in a generation. At Digito, we've been watching it closely. Here's what it actually is, why it matters, and where it fits inside our pipeline.

What is 3D Gaussian Splatting

Traditional 3D representations work with surfaces — explicit geometry that tells the renderer where an object's face, edge, or vertex is. NeRF (Neural Radiance Fields) pushed toward something different: implicit representations learned from photographs, where a neural network describes the world as a continuous function of space.

3D Gaussian Splatting is the third path. Instead of surfaces or neural functions, it represents a scene as millions of tiny ellipsoids — "Gaussian splats" — each one defined by position, size, orientation, opacity, and color. Together they form a probabilistic cloud that, when rendered from any angle, produces photorealistic images at real-time speeds.

"The question isn't whether Gaussians can match polygon fidelity. It's whether they can exceed what polygons ever allowed."

The key insight is that this representation can be learned directly from a set of photographs using Structure from Motion. You shoot a real person from many angles, run them through a reconstruction pipeline, and the output isn't a mesh — it's a trained cloud of splats that can render that person from any viewpoint, at real-time framerates, with a visual quality that polygons struggle to touch.

How it works

Photos are processed through COLMAP or RealityCapture to extract camera poses and a sparse point cloud. This seeds an optimization loop that places and tunes millions of Gaussian splats — adjusting their position, scale, rotation, opacity, and spherical harmonic color — until they reproduce the input images as closely as possible. The result is a "splat file" that renders in real time with a rasterization-based renderer.

The dynamic leap

Static 3DGS is impressive. Buildings, products, environments — anything you can photograph from enough angles comes out looking uncanny. But digital humans aren't static objects. They talk. They blink. They tilt their heads. The real challenge — and the real frontier — is making Gaussians dynamic.

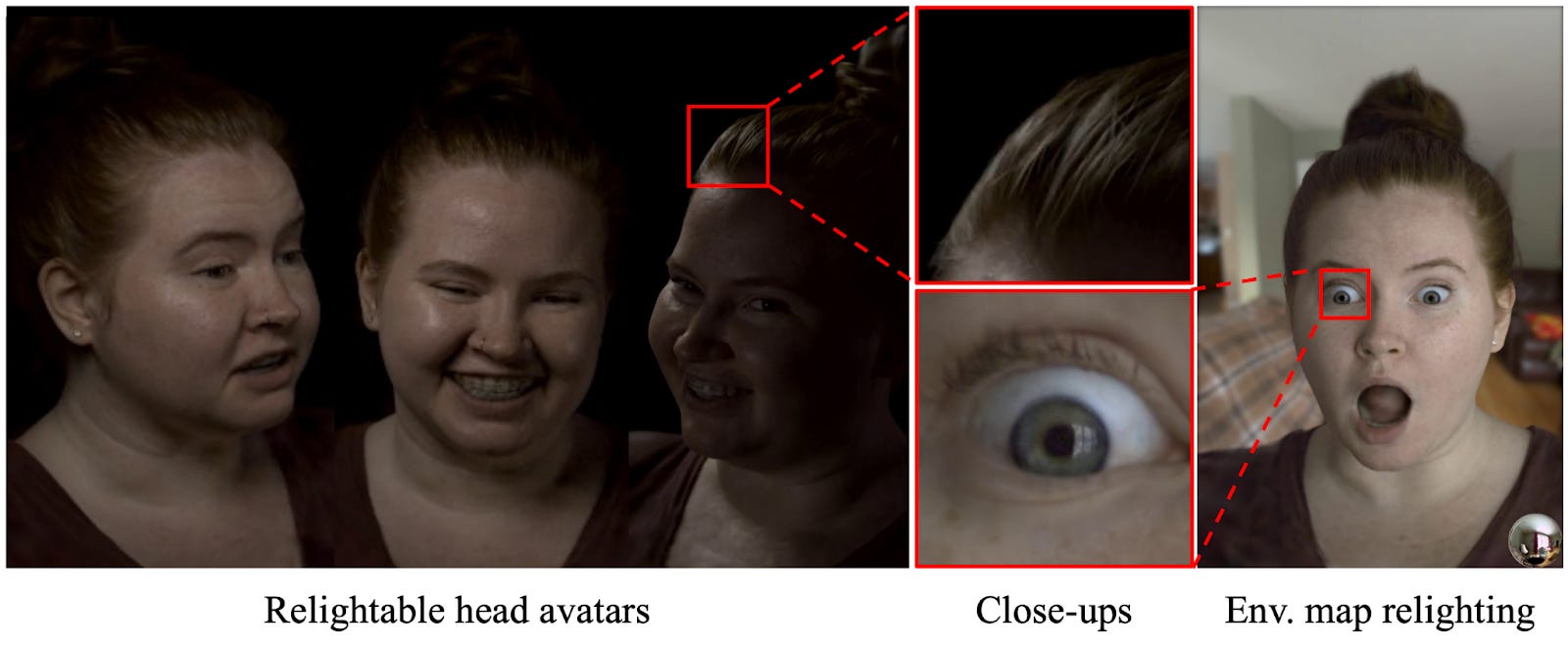

Dynamic Gaussian Avatars solve this by learning to deform the Gaussian cloud over time. Instead of a fixed set of splats, the system learns a canonical representation plus a deformation field. Given an expression code or a driving signal (audio, video, motion capture), it can morph the cloud in real time to match — producing a photo-realistic animated avatar driven purely by data, with no rig, no blend shapes, no traditional animation pipeline at all.

Several research directions are making this practical:

ApproachHow it drives the avatarReal-time ready3DGS + deformation fieldLearned warp of canonical Gaussians per frame✓FLAME-guided 3DGSGaussian cloud bound to a parametric face model✓4D Gaussian SplattingTime as a fourth dimension — full sequence modeling~Audio-driven Gaussian avatarsDirect audio-to-deformation mapping✓

What makes this remarkable isn't just the visual quality. It's what disappears from the pipeline. No retopology. No UV unwrapping. No rig. No blend shape authoring. No ZBrush sculpt for secondary expressions. The data does the work that artists used to spend months on.

Digito perspective

Digito's current pipeline runs on Unreal Engine with MetaHuman-grade geometry, fully rigged characters, LLM-powered conversation, and ElevenLabs voice. It produces digital humans that are genuinely cinematic — the kind of close-up that makes people question what they're looking at.

Dynamic Gaussians don't replace that pipeline. They extend it.

1. Photoscan capture session

Shoot a real person or approved likeness from 50–200 angles using calibrated multi-camera rigs or structured-light scanning. This is the raw material Gaussian optimization needs.

2. Gaussian reconstruction

Run capture data through SfM (RealityCapture or COLMAP) to extract camera poses, then train the Gaussian representation. Output: a splat file that renders the subject photorealistically from any angle.

3. Dynamic deformation training

Train a deformation field on a set of expressions and head poses, using audio or motion capture as driving signals. This is where the static splat becomes a living, talking avatar.

4. UE integration + AI brain

Embed the rendered Gaussian stream inside Unreal Engine alongside our LLM conversational layer, ElevenLabs TTS, and the Digito portal for client configuration. The character thinks, speaks, and responds in real time.

The most immediate application is licensed likeness work — situations where a client needs to recreate a real person with maximum fidelity and minimum artist time. The Gaussian approach captures what sculpting can't: sub-millimeter skin texture, accurate subsurface scattering, natural micro-expressions baked directly from captured data.

"The skin you see is real skin. Captured, not sculpted. That's a line Unreal Engine geometry hasn't crossed yet."

For brand ambassadors and celebrity digital twins — a growing category among luxury, automotive, and entertainment clients — this changes the conversation entirely. Instead of months of artist time approximating a likeness, you capture it in a day. The quality ceiling goes up. The production time comes down.

Honest limits

This would be a thinner article if we pretended the technology is solved. It isn't. There are real constraints that inform how we position it.

Body and clothing remain difficult. Current dynamic Gaussian systems work well for head and face — the region with the most training data and the most mature deformation models. Full-body avatars with complex clothing simulation are still a research frontier, not a production pipeline.

Editability is limited. A trained Gaussian model is hard to art-direct after the fact. You can't tweak a jawline or fix a skin tone the way you'd adjust a sculpt. If the capture data has problems, the reconstruction reflects them. The pipeline front-loads quality control in a way traditional production doesn't.

Integration into Unreal Engine is still emerging. Plugins exist, but the tooling isn't as mature as MetaHuman workflows. For clients who need custom animation, interactive rig access, or complex scene compositing, the polygon pipeline still wins on flexibility.

And rendering costs scale differently. A scene with ten Gaussian avatars behaves very differently from a scene with ten MetaHumans. GPU memory requirements are significant and need to be planned into deployment architecture.

The bottom line

The digital human space is converging on a future where multiple representations coexist. Polygon rigs for controllability and flexibility. Neural avatars for photorealism from capture. Gaussian splats for the uncanny territory in between — the close-up where every pore matters, the likeness where every millimeter counts.

At Digito, we're not betting the studio on any single approach. We're building the fluency to deploy whichever representation serves the client's brief — and increasingly, that's going to mean Dynamic Gaussian Avatars as part of a hybrid pipeline that no single technology provider is offering today.

The face is still the hardest problem in real-time rendering. We've been working on it for years. This is just the next chapter.

April 12, 2026

Marlon R. Nunez